Xiaomi has introduced its latest open-weight AI model, MiMo-V2.5, which promises a significant enhancement in agentic capabilities and multimodal understanding.

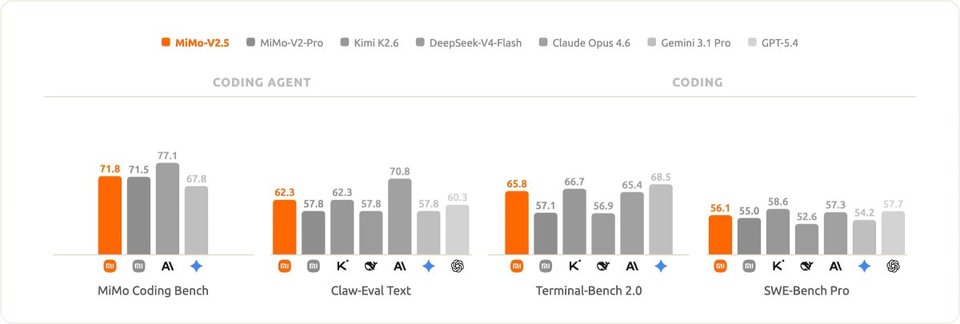

The company has released several benchmark results comparing MiMo-V2.5 to other recent models, including DeepSeek-V4, Kimi K2.6, Claude Opus 4.6, Gemini 3.1 Pro, and its predecessor, the MiMo-V2-Pro.

Xiaomi asserts that MiMo-V2.5 achieved top-tier performance on its proprietary agentic tasks benchmark. Notably, on the internal MiMo Coding Bench, the more compact V2.5 model performed comparably to the larger V2.5-Pro model at only half the cost. In evaluations of its image and video comprehension, MiMo-V2.5 matched the performance of several closed-source models, according to Xiaomi.

MiMo-V2.5 evaluated on coding and agentic tasks.

The model was trained on 48 trillion tokens and is natively multimodal, accepting text, image, and video input. Xiaomi has released two variants: MiMo-V2.5, with 310 billion total parameters (15 billion active), and MiMo-V2.5-Pro, with 1.02 trillion total parameters (42 billion active). Both versions support a context window of up to 1 million tokens.

MiMo-V2.5 evaluated on image and video understanding.

You can download the model from Hugging Face and run it locally, but doing so requires high-end hardware such as a Mac Studio configured with a large amount of memory. Consumer-grade GPUs, including the Nvidia RTX 5090, do not have enough VRAM.
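To make the hardware requirement concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone would occupy. The parameter counts come from Xiaomi's figures above; the precisions (16, 8, and 4 bits per parameter) are illustrative assumptions, since the format of the released weights is not stated here. The 32 GB figure is the RTX 5090's VRAM, and the 512 GB figure assumes a top-end Mac Studio configuration. The estimate ignores the KV cache, activations, and runtime overhead, so real requirements will be higher.

```python
# Rough estimate of weight memory only. All total parameters must be resident
# in memory even though only a fraction is active for each token.

def weight_memory_gb(total_params: float, bits_per_param: float) -> float:
    """Approximate size of the weights in decimal gigabytes."""
    return total_params * bits_per_param / 8 / 1e9


def fits(total_params: float, bits_per_param: float, capacity_gb: float) -> bool:
    """True if the weights alone fit in the given memory capacity."""
    return weight_memory_gb(total_params, bits_per_param) <= capacity_gb


# Parameter counts as reported by Xiaomi.
MODELS = {"MiMo-V2.5": 310e9, "MiMo-V2.5-Pro": 1.02e12}

# Memory capacities in GB. The Mac Studio value is an assumed configuration.
DEVICES = {"RTX 5090": 32, "Mac Studio (assumed 512 GB)": 512}

# Illustrative precisions; not confirmed release formats.
PRECISIONS = (16, 8, 4)

for name, params in MODELS.items():
    for bits in PRECISIONS:
        size = weight_memory_gb(params, bits)
        verdict = ", ".join(
            f"{dev}: {'fits' if fits(params, bits, cap) else 'too large'}"
            for dev, cap in DEVICES.items()
        )
        print(f"{name} @ {bits}-bit: ~{size:.0f} GB ({verdict})")
```

Even under the aggressive 4-bit assumption, the smaller model's weights come to roughly 155 GB, several times the capacity of a single RTX 5090, which is consistent with the article's point about consumer GPUs.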

For now, you can try Xiaomi MiMo-V2.5 in AI Studio (which is currently experiencing loading issues) or use it through the official API. If your hardware meets the requirements, you can also download it and run it locally.